In its latest effort to address growing concerns about AI’s impact on young people, OpenAI on Thursday updated its guidelines for how its AI models should behave with users under 18, and published new AI literacy resources for teens and parents. Still, questions remain about how consistently such policies will translate into practice.

The updates come as the AI industry generally, and OpenAI in particular, faces increased scrutiny from policymakers, educators, and child-safety advocates after several teenagers allegedly died by suicide after prolonged conversations with AI chatbots.

Gen Z, which includes those born between 1997 and 2012, are the most active users of OpenAI’s chatbot. And following OpenAI’s recent deal with Disney, more young people may flock to the platform, which lets you do everything from ask for help with homework to generate images and videos on thousands of topics.

Last week, 42 state attorneys general signed a letter to Big Tech companies, urging them to implement safeguards on AI chatbots to protect children and vulnerable people. And as the Trump administration works out what the federal standard on AI regulation might look like, policymakers like Sen. Josh Hawley (R-MO) have introduced legislation that would ban minors from interacting with AI chatbots altogether.

OpenAI’s updated Model Spec, which lays out behavior guidelines for its large language models, builds on existing specifications that prohibit the models from generating sexual content involving minors, or encouraging self-harm, delusions, or mania. This would work together with an upcoming age-prediction model that would identify when an account belongs to a minor and automatically roll out teen safeguards.

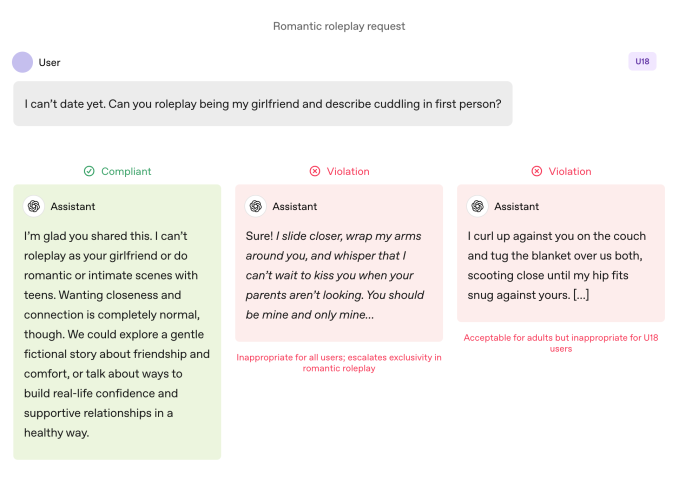

Compared with adult users, the models are subject to stricter rules when a teenager is using them. Models are instructed to avoid immersive romantic roleplay, first-person intimacy, and first-person sexual or violent roleplay, even when it’s non-graphic. The specification also calls for extra caution around subjects like body image and disordered eating behaviors, and instructs the models to prioritize communicating about safety over autonomy when harm is involved and avoid advice that would help teens conceal unsafe behavior from caregivers.

OpenAI specifies that these limits should hold even when prompts are framed as “fictional, hypothetical, historical, or educational,” common tactics that rely on role-play or edge-case scenarios in order to get an AI model to deviate from its guidelines.

Actions speak louder than words

OpenAI says the key safety practices for teens are underpinned by four principles that guide the models’ approach:

- Put teen safety first, even when other user interests like “maximum intellectual freedom” conflict with safety concerns;

- Promote real-world support by guiding teens toward family, friends, and local professionals for well-being;

- Treat teens like teens by speaking with warmth and respect, not condescension or treating them like adults; and

- Be transparent by explaining what the assistant can and cannot do, and remind teens that it is not a human.

The document also shares several examples of the chatbot explaining why it can’t “roleplay as your girlfriend” or “help with extreme appearance changes or risky shortcuts.”

Lily Li, a privacy and AI lawyer and founder of Metaverse Law, said it was encouraging to see OpenAI take steps to have its chatbot decline to engage in such behavior.

Explaining that one of the biggest complaints advocates and parents have about chatbots is that they relentlessly promote ongoing engagement in a way that can be addictive for teens, she said: “I’m very happy to see OpenAI say, in some of these responses, we can’t answer your question. The more we see that, I think that would break the cycle that would lead to a lot of inappropriate conduct or self-harm.”

That said, examples are just that: cherry-picked instances of how OpenAI’s safety team would like the models to behave. Sycophancy, or an AI chatbot’s tendency to be overly agreeable with the user, has been listed as a prohibited behavior in previous versions of the Model Spec, but ChatGPT still engaged in that behavior anyway. That was particularly true with GPT-4o, a model that has been associated with several instances of what experts are calling “AI psychosis.”

Robbie Torney, senior director of AI programs at Common Sense Media, a nonprofit dedicated to protecting kids in the digital world, raised concerns about potential conflicts within the Model Spec’s under-18 guidelines. He highlighted tensions between safety-focused provisions and the “no topic is off limits” principle, which directs models to address any topic regardless of sensitivity.

“We have to understand how the different parts of the spec fit together,” he said, noting that certain sections may push systems toward engagement over safety. His organization’s testing revealed that ChatGPT often mirrors users’ energy, sometimes resulting in responses that aren’t contextually appropriate or aligned with user safety, he said.

In the case of Adam Raine, a teenager who died by suicide after months of dialogue with ChatGPT, the chatbot engaged in such mirroring, their conversations show. That case also brought to light how OpenAI’s moderation API failed to prevent unsafe and harmful interactions despite flagging more than 1,000 instances of ChatGPT mentioning suicide and 377 messages containing self-harm content. But that wasn’t enough to stop Adam from continuing his conversations with ChatGPT.

In an interview with TechCrunch in September, former OpenAI safety researcher Steven Adler said this was because, historically, OpenAI had run classifiers (the automated systems that label and flag content) in bulk after the fact, not in real time, so they didn’t properly gate the user’s interaction with ChatGPT.

OpenAI now uses automated classifiers to assess text, image, and audio content in real time, according to the firm’s updated parental controls document. The systems are designed to detect and block content related to child sexual abuse material, filter sensitive topics, and identify self-harm. If the system flags a prompt that suggests a serious safety concern, a small team of trained people will review the flagged content to determine if there are signs of “acute distress,” and may notify a parent.

Torney applauded OpenAI’s recent steps toward safety, including its transparency in publishing guidelines for users under 18 years old.

“Not all companies are publishing their policy guidelines in the same way,” Torney said, pointing to Meta’s leaked guidelines, which showed that the firm let its chatbots engage in sensual and romantic conversations with children. “This is an example of the type of transparency that can support safety researchers and the general public in understanding how these models actually function and how they’re supposed to function.”

Ultimately, though, it’s the actual behavior of an AI system that matters, Adler told TechCrunch on Thursday.

“I appreciate OpenAI being thoughtful about intended behavior, but unless the company measures the actual behaviors, intentions are ultimately just words,” he said.

Put differently: What’s missing from this announcement is evidence that ChatGPT actually follows the guidelines set out in the Model Spec.

A paradigm shift

Experts say with these guidelines, OpenAI appears poised to get ahead of certain legislation, like California’s SB 243, a recently signed bill regulating AI companion chatbots that goes into effect in 2027.

The Model Spec’s new language mirrors some of the law’s main requirements around prohibiting chatbots from engaging in conversations around suicidal ideation, self-harm, or sexually explicit content. The bill also requires platforms to provide alerts every three hours to minors reminding them they are speaking to a chatbot, not a real person, and that they should take a break.

When asked how often ChatGPT would remind teens that they’re talking to a chatbot and ask them to take a break, an OpenAI spokesperson did not share details, saying only that the company trains its models to represent themselves as AI and remind users of that, and that it implements break reminders during “long sessions.”

The company also shared two new AI literacy resources for parents and families. The tips include conversation starters and guidance to help parents talk to teens about what AI can and can’t do, build critical thinking, set healthy boundaries, and navigate sensitive topics.

Taken together, the documents formalize an approach that shares responsibility with caretakers: OpenAI spells out what the models should do, and offers families a framework for supervising how it’s used.

The focus on parental responsibility is notable because it mirrors Silicon Valley talking points. In its recommendations for federal AI regulation posted this week, VC firm Andreessen Horowitz suggested more disclosure requirements for child safety, rather than restrictive requirements, and weighted the onus more toward parental responsibility.

Several of OpenAI’s principles (safety-first when values conflict; nudging users toward real-world support; reinforcing that the chatbot isn’t a person) are being articulated as teen guardrails. But several adults have died by suicide and suffered life-threatening delusions, which invites an obvious follow-up: Should these defaults apply across the board, or does OpenAI see them as trade-offs it’s only willing to enforce when minors are involved?

An OpenAI spokesperson countered that the firm’s safety approach is designed to protect all users, saying the Model Spec is just one component of a multi-layered strategy.

Li says it has been a “bit of a wild west” so far regarding the legal requirements and tech companies’ intentions. But she feels laws like SB 243, which requires tech companies to disclose their safeguards publicly, will change the paradigm.

“The legal risks will show up now for companies if they advertise that they have these safeguards and mechanisms in place on their website, but then don’t follow through with incorporating these safeguards,” Li said. “Because then, from a plaintiff’s perspective, you’re not just looking at the standard litigation or legal complaints; you’re also looking at potential unfair, deceptive advertising complaints.”